Continuous Integration: The Path to the Future for HPC

By Rob Farber, contributing writer

The Exascale Computing Project (ECP) is investing heavily in software for the forthcoming exascale systems, as can be seen in the many tools, libraries, and software components that are already freely available for download via the Extreme-Scale Scientific Software Stack (E4S). For these software investments to achieve their full potential, ECP is creating a concerted testing and continuous integration (CI) effort. Specifically, the ECP CI project automates building and testing of the ECP software ecosystem at various US Department of Energy (DOE) facilities (hereinafter referred to as Facilities). The proliferation of new machine architectures is positioning CI as an essential element of the nation’s high-performance computing (HPC) capability. Although this is a work in progress, the CI framework is already in use at several DOE facilities.

CI creates a win-win for scientists, users, and systems management teams. Through the use of automation and by allocating both people and machine resources, CI provides timely, well-tested, and verified software to users of the DOE Facilities, along with a more uniform and well-tested computing environment. With ECP’s CI project, software is tested and verified across sites, meaning the software used by researchers will likely run correctly and perform well at each facility where a scientist may have a computing allocation.

Ryan Adamson, group leader for the HPC Security and Information Engineering Group at Oak Ridge National Laboratory (ORNL) and the ECP lead for Software Deployment at the Facilities, explains, “The scope of continuous integration is so broad that the process has to be automated so developers can see errors and fix them.”

Figure 1 shows the breadth of software products that ECP addresses. For more information, see the article published on ECP’s website titled “The Extreme-Scale Scientific Software Stack (E4S): A New Resource for Computational and Data Science Research.”

Figure 1: As of February 2020, the ECP software technology (ST) products are organized into six software development kits (SDKs), which are the first six columns in the image. The rightmost column lists products that are not part of an SDK but are part of the Ecosystem group that will also be delivered as part of E4S. The colors denoted in the key map all the ST products to the ST technical area of which they are a part. For example, the xSDK consists of products that are in the Math Libraries Technical area, plus TuckerMPI, which is in the Ecosystem and Delivery technical area.

(Source: ECP Software Technology Capability Assessment Report.)

“Continuous” Enables More Productive Science

The “continuous” in “CI” indicates that the software is built and tested frequently and with fine granularity so that code changes do not introduce latent bugs during development. Such bugs are costly because they can cause runtime errors and performance regressions in production, and detecting and correcting them after software has been released can waste tremendous amounts of human and machine time. In addition, after an HPC system upgrade, a CI framework can enable quick verification of the new software environment. Further, any issues that might occur as a result of changes to the system hardware or software by the testing Facility can be detected and addressed. The goal of the CI framework is to catch any bugs or performance issues before the latest software and hardware changes are accepted for installation on the production system.
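The idea of catching performance regressions before a change is accepted can be illustrated with a minimal sketch. This is not the ECP CI implementation; the names `check_regression` and `gate`, the 10% tolerance, and the timings are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class Check:
    name: str
    passed: bool
    detail: str


def check_regression(name, baseline_s, measured_s, tolerance=0.10):
    """Fail a test whose run time exceeds its baseline by more than `tolerance`.

    The 10% default is an assumed threshold, not an ECP setting.
    """
    limit = baseline_s * (1.0 + tolerance)
    slowdown = measured_s / baseline_s - 1.0
    return Check(name, measured_s <= limit, f"{slowdown:+.1%} vs. baseline")


def gate(checks):
    """Accept a change only if every check passed."""
    return all(c.passed for c in checks)


if __name__ == "__main__":
    # Hypothetical timings in seconds for two made-up tests.
    results = [
        check_regression("solver_small", baseline_s=10.0, measured_s=10.4),
        check_regression("solver_large", baseline_s=60.0, measured_s=75.0),
    ]
    for c in results:
        print(f"{'PASS' if c.passed else 'FAIL'} {c.name}: {c.detail}")
    print("accept change" if gate(results) else "reject change")
```

In this sketch a single failing check blocks the change, which mirrors the goal of stopping a regression before it reaches the production system.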

The Time and Production Benefits are Staggering

Shahzeb Siddiqui, ECP Software Integration Lead from Lawrence Berkeley National Laboratory (LBL), observes that “The amount of developer cycles saved is tremendous. This also translates to significant production cycles saved through the identification of performance regressions and avoidance of software errors.”

Even though the ECP CI framework is in its early stages, anecdotal reports of order-of-magnitude performance benefits for production workloads reflect its potential. David Rogers, the data science team lead on the Scale Team at Los Alamos National Laboratory, observes, “For the current workflows, we get approximately [a] 10× speedup in Spack build-from-source vs. Spack install-from-cache (~1 min vs. ~12 min). We are timing only the Spack build/install of Ascent and its dependent packages and at the moment are not including the build time of the application (Nyx), which is not currently cached.” [1]
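The arithmetic behind the quoted speedup can be written out as a short sketch. It assumes that the ~12 min figure is the build from source and the ~1 min figure is the install from cache, which is the only reading consistent with a speedup. The function names are hypothetical.

```python
def speedup(slow_minutes, fast_minutes):
    """Ratio of the slower time to the faster time."""
    return slow_minutes / fast_minutes


def minutes_saved(slow_minutes, fast_minutes):
    """Wall-clock minutes saved per install by using the cache."""
    return slow_minutes - fast_minutes


if __name__ == "__main__":
    build_from_source_min = 12.0   # approximate figure from the quote
    install_from_cache_min = 1.0   # approximate figure from the quote
    factor = speedup(build_from_source_min, install_from_cache_min)
    saved = minutes_saved(build_from_source_min, install_from_cache_min)
    print(f"about {factor:.0f}x faster, about {saved:.0f} min saved per install")
```

Both inputs are rounded estimates, so the resulting factor of about 12 is consistent with the "approximately 10×" in the quote.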

CI Provides the Infrastructure to Efficiently Deploy Software across Current and Future HPC Centers

Tested and verified software is not a new concept, but the automated ECP framework for the CI of the E4S at multiple DOE sites is new. Paul Bryant, the lead for CI and Deployment at Oak Ridge National Laboratory (ORNL), states, “The goal is to have an automated software deployment for DOE. There are many, many pieces that have to work to meet that goal. CI provides the infrastructure that makes that process work.”

As a result of CI, the E4S software can be deployed across all the destination DOE supercomputer architectures, providing a robust ecosystem for HPC users. Even better, these tested and verified software builds are free to download, so everyone in the HPC, enterprise, scientific, and commercial cloud communities benefits from this ECP effort.

This is a far-reaching benefit of the ECP effort. Access to prebuilt, tested, and verified software addresses a significant issue for HPC developers. Access to the E4S software will likely become a mainstay of HPC development efforts and production runs, making CI an enduring legacy benefit of ECP.

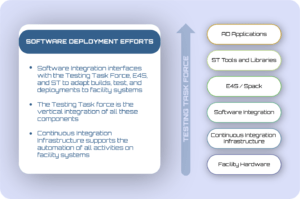

Figure 2 illustrates where CI fits in the general ECP software deployment effort. Generally, AD applications, as well as ST (software technology) libraries integrated into the E4S stack, are built by using Spack. After that, the CI infrastructure can take over to provide testing and verification on a variety of hardware platforms.

The Numbers are Growing

The number of build, test, and verify runs is growing weekly, as reflected in the E4S Spack build cache, which currently contains over 27,000 variants of the current ECP software components. The E4S Build Cache for Spack 0.16.0 webpage shows the current count and the supported Linux and CPU releases.

E4S can be built for each operating system (OS) and OS release to support a variety of CPU and GPU architectures. Configurations with multiple GPUs per node and distributed MPI runtimes are also part of the binary cache inventory, adding even more complexity and testing time.
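A small sketch shows why the number of cached variants grows so quickly as these axes are combined. Every value below is a hypothetical placeholder rather than the actual E4S support list, and the exclusion rule in `is_supported` is purely illustrative and says nothing about real platform support.

```python
from itertools import product

# Hypothetical axes of a build matrix; not the real E4S inventory.
OPERATING_SYSTEMS = ["ubuntu20.04", "rhel8", "centos7"]
CPU_TARGETS = ["x86_64", "ppc64le", "aarch64"]
GPU_BACKENDS = ["none", "cuda", "rocm"]
MPI_RUNTIMES = ["mpich", "openmpi"]


def is_supported(os_name, cpu, gpu, mpi):
    """Illustrative exclusion rule: drop one made-up unsupported pairing."""
    return not (cpu == "aarch64" and gpu == "rocm")


def build_matrix():
    """Enumerate every supported combination for a single package."""
    axes = product(OPERATING_SYSTEMS, CPU_TARGETS, GPU_BACKENDS, MPI_RUNTIMES)
    return [combo for combo in axes if is_supported(*combo)]


if __name__ == "__main__":
    matrix = build_matrix()
    print(f"{len(matrix)} configurations per package")
    for combo in matrix[:3]:
        print(" / ".join(combo))
```

Each additional package version or compiler multiplies this count again, which is one way a binary cache can reach tens of thousands of variants.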

All this work is hidden from users because applications and libraries can be downloaded nearly instantaneously from the E4S Spack build cache. This means that production workflows, such as ExaWorks,[2] can automate provisioning. Various container types and build-from-source capabilities via existing Spack build recipes are also available. Users can therefore easily download and try the E4S software ecosystem for evaluation, as well as perform minimal container downloads to streamline the use of the E4S software components in production runs.

Addressing all the software commits (i.e., changes to the software) in the source of the many E4S software components can be resource intensive. The CI team reports that there are roughly 500 commits to Spack per month,[3] each of which can potentially trigger builds at several Facilities, and each of those builds can spawn a pipeline of up to 100 GitLab jobs.
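These figures imply a rough upper bound on the monthly job volume, sketched below. The commit count and the jobs-per-pipeline limit come from the text; the value of three facility builds per commit is an assumption standing in for "several", and the function name is hypothetical.

```python
def max_jobs_per_month(commits, facility_builds_per_commit, jobs_per_pipeline):
    """Upper bound: every commit triggers every facility build at full size."""
    return commits * facility_builds_per_commit * jobs_per_pipeline


if __name__ == "__main__":
    commits_per_month = 500        # from the text (approximate)
    jobs_per_pipeline = 100        # from the text (upper limit)
    facility_builds = 3            # assumption: stands in for "several"
    bound = max_jobs_per_month(commits_per_month, facility_builds, jobs_per_pipeline)
    print(f"up to {bound:,} GitLab jobs per month")
```

Because not every commit triggers a build and most pipelines will be smaller than the limit, the real volume should be lower than this bound.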

A Cohesive Support Model to Fix Software Issues

The intended benefit of continuous integration is that developers receive immediate feedback so errors don’t propagate throughout the source tree and become entrenched in stable releases.

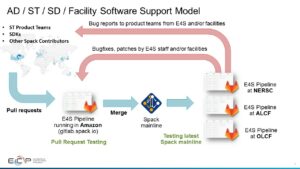

A schematic of the software support model is illustrated in Figure 3. The expansive nature of the CI effort requires a cohesive strategy to provide developers with feedback so they can fix the myriad of issues that will be detected when building, testing, and verifying multiple packages on many system configurations. In particular, Figure 3 shows the DOE facilities in which the software is tested, as well as the two feedback loops internal and external to developers.

Modern Tested and Verified Software is the Future of HPC and a Necessity for Exascale Environments

A beautiful thing occurs when developers and system teams can start using tested and verified current software releases almost instantaneously and without a significant time or machine investment. CI replaces the traditional HPC ad hoc per-site software build and integration with a more modern and much more efficient process.

Although still in its early stages, the ECP effort already provides people and machine resources at various institutions, such as those shown in Figure 2, to make installing a tested and verified current software release a low-risk, low-cost effort.

Adamson highlights the following use cases to help avoid stale software.

- Latest software use case: CI provides a mechanism that guarantees to the ST team that the latest software will work at supported institutions.

- Facilities stable use case: When appropriate, the E4S team can specify when to pause and move to a more current version.

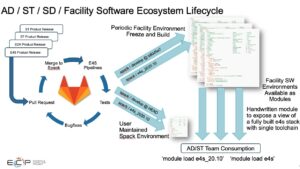

These use cases highlight how CI is the path to the future, not only for HPC but also for academic, enterprise, commercial, and cloud users. The availability of a consistent, scalable, cross-platform, tested, and verified E4S software stack checks all the boxes that computer scientists need to run current software at their facilities worldwide, both now and in the future. Figure 4 illustrates the software ecosystem life cycle.

Summary

With the proliferation of new machine architectures, CI is considered an essential requirement for software deployment in industry and commercial settings, and HPC software is no exception. The ECP CI effort is a national asset that, even in its current early stage, is already delivering cross-platform tested and verified software for the US exascale effort. The benefits extend far beyond the DOE stable of supercomputers because the ECP tested and verified software can also be freely downloaded and used by the global HPC, enterprise, and scientific user communities.

To listen to an interview with ORNL’s Ryan Adamson on Continuous Integration, click here.

Rob Farber is a global technology consultant and author with an extensive background in HPC and in developing machine learning technology that he applies at national laboratories and commercial organizations.

[1] https://www.exascaleproject.org/the-extreme-scale-scientific-software-stack-e4s-a-new-resource-for-computational-and-data-science-research/

[2] https://www.exascaleproject.org/workflow-technologies-impact-sc20-gordon-bell-covid-19-award-winner-and-two-of-the-three-finalists/

[3] https://github.com/spack/spack/pulse/monthly