More-Effective Medicines, Better Batteries, and Greater Control over 3D Parts Creation Could Come in the Era of Exascale Computing

By Scott Gibson

With the arrival of exascale computing in 2021, researchers expect to have the power to describe the underlying properties of matter, and to optimize and control the design of new materials and energy technologies, at levels that would otherwise have been impossible.

Erik Draeger, Lawrence Livermore National Laboratory

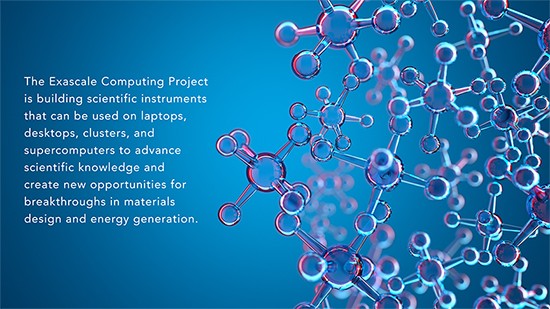

“Computer simulations have become an increasingly important tool in precisely understanding complex physics at the atomic and subatomic scales,” said Erik Draeger, the deputy director for Application Development in the US Department of Energy’s Exascale Computing Project (ECP). “By enabling simulations of unprecedented size and accuracy, the Chemistry and Materials application teams are advancing scientific knowledge and creating new opportunities in materials design and energy generation.”

Human Curiosity and Innovation

Exascale computing will be the modern-day tool for transcending what is known about the fundamental laws of nature, not only to advance science in general but also to pave the way to new quality-of-life-enhancing inventions.

“Exploring the fundamental laws of nature is something that humans have always done,” said Andreas Kronfeld, a theoretical physicist at Fermilab who leads an ECP project that is validating the fundamental laws of nature.

Kepler studied the motion of the planets, and then later Newton’s laws of motion and gravity provided the foundation for Kepler’s laws. Faraday’s experiments with electricity were intriguing discoveries that informed the development of future applications.

“In the end, understanding motion and electricity led to the industrial revolution,” Kronfeld said.

The next big fundamental breakthrough was the discovery of quantum mechanics about 100 years ago. Quantum mechanics is behind the transistor and all the electronics we use for the internet.

Andreas Kronfeld, Fermilab

Atomic physics came with quantum mechanics and was followed by about four decades of researchers exploring the structure of the atom and considering the nucleus, composed of protons and neutrons, to be a fundamental object.

During the 1960s and 1970s, particles similar to protons and neutrons were discovered, which brought us to the work of today's particle physicists, who study the interactions between quarks and gluons (the field of quantum chromodynamics, or QCD), and between electrons and neutrinos. The next quest is for what may exist beyond those subatomic particles.

“There are things we observe in the lab that cannot quite be explained by the Standard Model of particle physics,” Kronfeld said.

The Standard Model is the framework that describes the basic components of matter and the forces that act upon them. But it doesn’t explain gravity, what dark matter is, why neutrinos have mass, why more matter than antimatter exists, why the expansion of the universe is accelerating, and other mysteries of particle physics.

Kronfeld believes pursuing such big science is important both because it is part of what makes us human and because life-enhancing technological spinoffs follow.

Researchers use so-called lattice QCD calculations to explore the fundamental physics of subatomic particles and atomic nuclei. However, these calculations demand an enormous amount of computing power, which is why the best-performing supercomputers are so coveted.

“We can do QCD calculations with pre-exascale computers and even more complicated calculations with exascale computers and the scale that will come after that,” Kronfeld said. “We can drive this connection between QCD and nuclear physics, and so that’s why exascale computing is essential for the nuclear physicists engaged or participating in the ECP for lattice QCD.”

Widespread Breakthroughs

Besides being key to advancing the exploration of the fundamental laws of nature, exascale computing will drive breakthroughs in the design of biofuel catalysts, the use of molecular dynamics simulations, understanding materials at the quantum level, and the reduction of trial and error in additive manufacturing.

Jack Deslippe, Lawrence Berkeley National Laboratory

“ECP’s materials science and chemistry application teams working to harness exascale computing are using highly accurate methods to examine realistic materials for devices,” said Lawrence Berkeley National Laboratory’s Jack Deslippe, leader of the effort to develop ECP’s portfolio of chemistry and materials computer applications. “What they’re doing now was previously infeasible because of the large material sizes involved and the computational demands of applying the most accurate methods. The exascale computing and software advances underway are poised to reach unprecedented simultaneous levels of fidelity, system size, and time scales.”

Fast-Acting Chemistry

US industry consumes about one-third of the nation's energy, and 90 percent of industrial processes use catalysts.

“A catalyst’s job is to lower energy barriers so that the chemistry can happen faster and more energy efficiently,” said Theresa Windus, an Ames Laboratory chemist working on computer models for the catalytic conversion of biomass to fuels. “This is why catalysts are extremely important in industrial processes. If we can improve a reaction’s energy profile by 10 percent, we’ve just saved 10 percent of the energy consumption for that process.”
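Why a lower energy barrier makes chemistry faster can be illustrated with the Arrhenius equation, k = A·exp(-Ea/(R·T)), which relates a reaction's rate constant k to its activation energy Ea. The sketch below is a textbook illustration, not the Ames Laboratory models: it assumes the pre-exponential factor A is the same with and without the catalyst, and the barrier values are hypothetical. It shows reaction speed only; it does not model the process-level energy savings Windus describes.

```python
import math

R = 8.314  # molar gas constant, J/(mol*K)

def arrhenius_speedup(ea_uncatalyzed_kj, ea_catalyzed_kj, temperature_k):
    """Ratio k_catalyzed / k_uncatalyzed from k = A * exp(-Ea / (R * T)).

    Assumes the pre-exponential factor A is identical for both pathways,
    so it cancels and only the barrier difference matters.
    """
    barrier_drop_j = (ea_uncatalyzed_kj - ea_catalyzed_kj) * 1000.0
    return math.exp(barrier_drop_j / (R * temperature_k))

# Hypothetical example: a catalyst lowers a 100 kJ/mol barrier to 90 kJ/mol at room temperature.
speedup = arrhenius_speedup(100.0, 90.0, 298.15)
print(f"Reaction runs about {speedup:.0f} times faster")
```

Because the barrier sits in an exponent, even a modest reduction multiplies the rate many times over, which is why small improvements in catalysts matter so much.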

Turning biomass into fuels and beneficial chemicals makes great sense because biomass is renewable.

Theresa Windus, Ames Laboratory

“We can keep planting or growing algae colonies year after year and, if we’re smart enough to figure out how to do the conversions, be able to produce fuels and chemicals,” Windus said.

Researchers will use the chemistry computer models that Windus and her team are developing for exascale to predict how very large chemical and material systems evolve at the microstructural level—a capability that could have impact across the chemistry, materials, engineering, and geoscience fields.

She said the insights may help in the creation of more-effective medicines, the production of better materials for electronic devices and other items, the design of more efficient combustion engines, the development of more accurate climate change models, and a greater understanding of the transport and sequestration of energy by-products in the environment.

Mark Gordon, also an Ames Laboratory chemist, is leading development of an ECP quantum chemistry application that is uniquely suited to exascale computing because it uses what are known as fragmentation methods.

Mark Gordon, Ames Laboratory

“ECP is allowing us to enable the exploration of a host of very important problems,” Gordon said.

Biofuel development is one of many examples: it involves cellulose particles containing a huge number of atoms, which can be broken down and studied as smaller systems.

Polymers, proteins, enzymes, and drug molecules can form very large molecular systems. The fragmentation method subdivides those systems into smaller pieces.

“It’s not unusual for biomolecules or polymers to have 50,000 atoms, and the way this method, or algorithm, is constructed, each different piece or fragment is computed on a different node,” Gordon said. “Instead of doing a calculation on 50,000 atoms, we do it on a fragment that has only 50 atoms. So, the computing data bottleneck goes way down.”

He sees the fragmentation method as a great fit for exascale computing because it can take advantage of parallel computing on all of the different threads, or independent streams of instructions.
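The fragmentation idea Gordon describes can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not his team's code: `fragment_energy` is a hypothetical placeholder for an expensive quantum chemistry calculation, atoms are represented only by indices, worker threads stand in for separate nodes, and the total is a plain sum of fragment energies (real fragmentation methods also account for interactions between fragments, which this sketch omits). The `cost_ratio` helper assumes, hypothetically, that a calculation's cost grows as the number of atoms raised to some power.

```python
import math
from concurrent.futures import ThreadPoolExecutor

def make_fragments(atoms, fragment_size):
    """Split a list of atoms into consecutive fragments of at most fragment_size atoms."""
    return [atoms[i:i + fragment_size] for i in range(0, len(atoms), fragment_size)]

def fragment_energy(fragment):
    """Hypothetical placeholder for an expensive quantum chemistry calculation on one fragment."""
    return -1.0 * len(fragment)  # toy model: -1 energy unit per atom

def fragmented_energy(atoms, fragment_size, workers=8):
    """Evaluate fragments independently and sum them (one-body approximation)."""
    fragments = make_fragments(atoms, fragment_size)
    # Fragments do not depend on each other, so they can run concurrently;
    # worker threads stand in for the separate nodes of a supercomputer.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        energies = list(pool.map(fragment_energy, fragments))
    return sum(energies), len(fragments)

def cost_ratio(n_atoms, fragment_size, power):
    """Cost of one whole-system calculation divided by the cost of all fragment
    calculations, assuming cost grows as (number of atoms) ** power."""
    n_fragments = math.ceil(n_atoms / fragment_size)
    return n_atoms ** power / (n_fragments * fragment_size ** power)

energy, n_frags = fragmented_energy(list(range(50_000)), 50)
print(n_frags, energy)            # 1000 fragments, total -50000.0
print(cost_ratio(50_000, 50, 3))  # 1000000.0
```

With Gordon's numbers (50,000 atoms split into 50-atom fragments) and a cubic cost, the fragment calculations together cost a millionth of the single large one, and each of the 1,000 fragments can be handed to a different worker.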

Customizable Molecular Dynamics Simulations

Another way to get a handle on what’s happening at the atomic level is with molecular dynamics simulations. Like virtual experiments, they reveal how atoms in a material move in time and space and the kinds of reactions that occur along the way.

Danny Perez, Los Alamos National Laboratory

For example, molecular dynamics simulations can predict the damage done in the first picoseconds after a fast, energetic particle collides with a material, such as in a nuclear reactor, and then how this damage either accumulates or repairs itself. Such a capability has big implications for making advances in nuclear fission and fusion.

“These simulations give you a picture that would have been almost impossible to get otherwise,” said Danny Perez, a Los Alamos National Laboratory technical staff member at the helm of an ECP project created to expand the scope of molecular dynamics simulations. “Radiation damage is often created in a millionth of a millionth of a second or a few of those. Because we have full resolution with molecular dynamics, we can see exactly what each atom did and what led to the formation of the defects, which is very powerful.”

In biology, these simulations can be used to virtually screen drug compounds by making them interact with target sites on the surface of a virus. Chemists can use molecular dynamics to predict what kind of reactions should occur on a surface during catalysis.

“If it’s a problem where the physics occurs at the atomic scale, then there’s a good chance that you will learn something new with molecular dynamics,” Perez said.

As with lattice QCD simulations, the chief drawback of molecular dynamics is its computational expense. Creating a computer model for the interactions in a new system of atoms is very time-consuming. And because atoms move extremely fast, simulations must advance through an enormous number of tiny time steps. Long, highly accurate simulations are very hard to do.
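The time-stepping that makes molecular dynamics expensive can be illustrated with velocity Verlet, a standard integration scheme in MD codes. Time steps are typically on the order of a femtosecond (10^-15 s), so one nanosecond of simulated time takes about a million steps and one millisecond about 10^12 steps. The minimal sketch below is not Perez's code: it integrates a hypothetical two-atom molecule with a harmonic bond in one dimension, in reduced units, with made-up values for `K_BOND` and `R_EQ`.

```python
def velocity_verlet(x, v, masses, force, dt, n_steps):
    """Integrate Newton's equations with velocity Verlet; returns final (x, v)."""
    f = force(x)
    for _ in range(n_steps):
        # Half-step velocity update using the current forces.
        v = [vi + 0.5 * dt * fi / m for vi, fi, m in zip(v, f, masses)]
        # Full-step position update.
        x = [xi + dt * vi for xi, vi in zip(x, v)]
        # Recompute forces at the new positions, then finish the velocity update.
        f = force(x)
        v = [vi + 0.5 * dt * fi / m for vi, fi, m in zip(v, f, masses)]
    return x, v

# Toy system in reduced units: two atoms on a line joined by a harmonic bond.
K_BOND = 1.0  # hypothetical bond stiffness
R_EQ = 1.0    # hypothetical equilibrium bond length

def bond_force(x):
    stretch = (x[1] - x[0]) - R_EQ
    return [K_BOND * stretch, -K_BOND * stretch]  # equal and opposite forces

def total_energy(x, v, masses):
    stretch = (x[1] - x[0]) - R_EQ
    kinetic = sum(0.5 * m * vi * vi for m, vi in zip(masses, v))
    return kinetic + 0.5 * K_BOND * stretch ** 2

masses = [1.0, 1.0]
x0, v0 = [0.0, 1.2], [0.0, 0.0]  # start with the bond stretched by 0.2
x, v = velocity_verlet(x0, v0, masses, bond_force, dt=0.01, n_steps=10_000)
print(total_energy(x0, v0, masses), total_energy(x, v, masses))  # nearly equal
```

Every step requires a fresh force evaluation, and in a real material that means computing interactions among millions of atoms, so the cost of reaching long time scales grows quickly.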

Perez’s project plans to bridge the gap between the capabilities of today’s standard molecular dynamics computer codes and the quantum mechanics calculations that materials scientists work with. He said the goal is to develop technical tools that will empower the scientists to decide the accuracy, length, and time of their molecular dynamics simulations and be ready to make the most of exascale computing when it becomes a reality.

The Rules of the Road

Developing new materials for improved batteries, sensors, computers, and other devices depends on being able to solve the equations of quantum mechanics to predict the makeup and behavior of whole classes of materials, while also quantifying the uncertainties of those predictions.

Paul Kent, Oak Ridge National Laboratory

“If we want to predict things like the ability of a material to conduct electricity, how it can store and release energy, whether it’s magnetic, or even what color it is, the rules of the road are given by quantum mechanics,” said Paul Kent, a senior research and development staff member at Oak Ridge National Laboratory (ORNL).

Kent leads a project that is using a computational approach named quantum Monte Carlo to do quantum mechanics calculations with minimal approximations.

“The field of materials moves quickly,” he said. “Last year a new superconductor, a neodymium nickelate, was discovered, and this material wasn’t known at the start of ECP. This is obviously something that we’d like to do calculations on. And perhaps there will be a new surprise material this time next year that we’ll want to apply the methods to.”

He believes exascale computing could provide the power and memory needed to test solutions and nail down the uncertainties of predictions.
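Quantum Monte Carlo estimates quantum mechanical energies by random sampling, so every answer comes with a statistical error bar that shrinks as more samples are drawn. The sketch below shows variational Monte Carlo, the simplest member of the QMC family, applied to a hydrogen atom with an assumed trial wavefunction exp(-alpha·r) in atomic units. It is a textbook toy, not the method or code of Kent's project. For this trial function the exact variational energy is alpha²/2 - alpha hartree, reaching the true ground-state energy of -0.5 hartree at alpha = 1.

```python
import math
import random

def local_energy(r, alpha):
    """E_L = (H psi) / psi for psi = exp(-alpha * r), hydrogen atom, atomic units."""
    return -0.5 * alpha ** 2 + (alpha - 1.0) / r

def vmc_energy(alpha, n_steps=100_000, step=1.0, block_size=1_000, seed=1):
    """Metropolis sampling of |psi|^2; returns (energy estimate, statistical error)."""
    rng = random.Random(seed)
    pos = [0.5, 0.5, 0.5]
    r = math.sqrt(sum(c * c for c in pos))
    samples = []
    for _ in range(n_steps):
        trial = [c + step * (rng.random() - 0.5) for c in pos]
        r_trial = math.sqrt(sum(c * c for c in trial))
        # Accept with probability min(1, |psi(trial)|^2 / |psi(current)|^2).
        if rng.random() < math.exp(-2.0 * alpha * (r_trial - r)):
            pos, r = trial, r_trial
        samples.append(local_energy(r, alpha))
    kept = samples[n_steps // 10:]  # discard the first 10% as equilibration
    # Blocking: average over blocks so correlated samples don't shrink the error bar.
    blocks = [sum(kept[i:i + block_size]) / block_size
              for i in range(0, len(kept) - block_size + 1, block_size)]
    mean = sum(blocks) / len(blocks)
    var = sum((b - mean) ** 2 for b in blocks) / (len(blocks) - 1)
    return mean, math.sqrt(var / len(blocks))

for alpha in (0.8, 0.9, 1.0):
    e, err = vmc_energy(alpha)
    print(f"alpha={alpha}: E = {e:.4f} +/- {err:.4f} hartree")
```

At alpha = 1 the trial function is exact, the local energy is the same at every sample, and the error bar collapses to zero. For realistic materials no such exact function is known, which is where large amounts of computing power go: into more samples and better trial functions to tighten the uncertainties.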

One of Kent's main goals is to obtain the highest performance on the coming exascale computing architectures from a single source code. Changing algorithms and collaborating with the ECP teams that design software technologies are therefore very important parts of his project's work.

“It’s simply not possible in terms of reasonable human effort to maintain different codes for different architectures,” Kent said.

He thinks innovative code design will pave the way for taking advantage of the unique features of each manufacturer's high-performance computing components.

Reducing Trial and Error

The time is also right for accelerating the qualification of metal parts made by additive manufacturing, according to John Turner, the computational engineering program director at ORNL.

John Turner, Oak Ridge National Laboratory

Additive manufacturing, or 3D printing, produces three-dimensional parts layer by layer in precise geometric shapes from data generated by computer-aided design (CAD) software or 3D object scanners. The parts and systems made using this approach can be both lighter and stronger, and can also have fewer components. Parts for a host of industries, from energy to aerospace and defense, health care, and more, are being created this way.

The focus of Turner’s project is on metal additive manufacturing where a very fine powder of metal (such as stainless steel, titanium, or other alloys) is blown or layered in a machine and then melted in precise locations to build up a part. He said the main advantage of additive manufacturing is in the ability to design with flexibility for a particular use.

With the additive manufacturing industry's current capabilities, achieving the required level of quality takes extensive trial and error. In addition, qualification is specific to a particular application and covers both the material itself and every aspect of the manufacturing process.

An advantage of additive manufacturing is that the processes involved are very repeatable. After optimal settings have been determined, the results of part creation can be very consistent. The downside is that the connection between the process parameters and the properties and behavior of the final part is not fully understood.
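To make "process parameters" concrete: in laser powder bed fusion, a common rule of thumb condenses laser power P, scan speed v, hatch spacing h, and layer thickness t into a single volumetric energy density, E = P/(v·h·t), in J/mm³. The sketch below screens candidate settings against an acceptable window of E before any build. Both the candidate settings and the 50 to 100 J/mm³ window are hypothetical, and energy density is only a coarse summary that cannot capture the full melt-pool physics. Rules of thumb like this are the kind of incomplete parameter-to-property link that high-fidelity simulation aims to replace.

```python
from dataclasses import dataclass

@dataclass
class ProcessParams:
    laser_power_w: float       # P, laser power in watts
    scan_speed_mm_s: float     # v, scan speed in mm/s
    hatch_spacing_mm: float    # h, distance between adjacent scan tracks in mm
    layer_thickness_mm: float  # t, powder layer thickness in mm

def volumetric_energy_density(p: ProcessParams) -> float:
    """E = P / (v * h * t), in J/mm^3."""
    return p.laser_power_w / (p.scan_speed_mm_s * p.hatch_spacing_mm * p.layer_thickness_mm)

def screen(candidates, window):
    """Keep only parameter sets whose energy density falls inside a (low, high) window."""
    low, high = window
    return [p for p in candidates if low <= volumetric_energy_density(p) <= high]

# Hypothetical candidate settings and a hypothetical acceptable window of 50-100 J/mm^3.
candidates = [
    ProcessParams(200.0, 1000.0, 0.1, 0.03),  # about 67 J/mm^3: inside the window
    ProcessParams(100.0, 1500.0, 0.1, 0.03),  # about 22 J/mm^3: below the window
    ProcessParams(300.0, 600.0, 0.1, 0.03),   # about 167 J/mm^3: above the window
]
accepted = screen(candidates, window=(50.0, 100.0))
print(accepted)
```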

Turner said modeling and simulation powered by exascale computing, in combination with artificial intelligence and machine learning, could reduce trial and error and save energy while ensuring the necessary level of quality. In other words, parts could be ‘born qualified.’

“What we mean by born qualified is that we can take a set of requirements and create exactly the part we need and that it will behave just the way we expect it to,” Turner said. “It’s a pretty high bar, but that’s where we’re headed.”

The goal of Turner’s project is to create open-source software tools and high-fidelity data that will enable researchers to simulate the entire additive manufacturing process and optimize the parameters to produce parts with the desired performance.

“We want to tell the machine, ‘Make me a part that fits here, and that supports this load, for this many years’ and it will just do it,” Turner said. “We’re a long way from there right now, but the community is making rapid progress toward that goal.”

He believes having that level of control over parts creation will improve supply-chain efficiency in terms of just-in-time delivery and specific applications.

“You wouldn’t have to make a bunch of generic parts that hopefully cover all of the needs that you have,” he said. “You can make the specific part you need, when you need it.”

The Aftereffects of Success

“Exascale will bring highly accurate advanced quantum chemistry approaches like quantum Monte Carlo, what’s known in the field as coupled cluster with single, double, and triple excitations, or CCSD(T), and highly accurate molecular dynamics simulations of realistic, complex materials,” Deslippe said. “Using those approaches, researchers will be able to examine thousands, tens of thousands, and hundreds of thousands of atoms, respectively, with millisecond time scales for molecular dynamics. This will enable the field to apply the highest fidelity methods to the task of designing next-generation energy storage and production devices that rely on complex underlying material geometries like reaction sites, defects, and interfaces.”

“I believe this work will give researchers unprecedented new capabilities to understand and manipulate chemical and material properties,” Draeger said. “And because the teams are targeting science at all scales of computing, from laptops to clusters to the next generation of supercomputers, these tools will open many new avenues of discovery and exploration. I expect this work will seed innovations in science and engineering that will endure long past ECP.”