ECP Advances the Science of Atmospheric Convection Modeling

By Coury Turczyn

Researchers supported by the US Department of Energy’s (DOE’s) Exascale Computing Project (ECP) have integrated the promising super-parameterization technique for modeling moist convection into the Energy Exascale Earth System Model (E3SM), which is a global climate modeling, simulation, and prediction project being developed by DOE. This method enables E3SM to significantly improve cloud resolution while also reducing compute time and cost.

Using an algorithmic approach known as the multiscale modeling framework (MMF), the E3SM-MMF code leverages the power of GPU-accelerated supercomputers. For the ECP project, the team initially adapted E3SM-MMF to run on the GPU-equipped Summit petascale supercomputer, which is operated by the Oak Ridge Leadership Computing Facility (OLCF) at Oak Ridge National Laboratory (ORNL). Their results were published in The International Journal of High Performance Computing Applications last year. Now the team is optimizing E3SM-MMF V2 to run on the Frontier exascale system, which is 7 times more powerful than Summit.

“Much of the technology developed in E3SM-MMF is already being used in the E3SM model and by the wider DOE climate modeling community. Longer term, we expect the E3SM-MMF approach to become the workhorse climate model for GPU-accelerated systems after the arrival of Frontier,” said Mark A. Taylor, chief computational scientist for E3SM at the Center for Computing Research at Sandia National Laboratories.

Integrating these higher-resolution cloud representations into E3SM will lead to more accurate predictions of changes to the Earth’s water cycle, its cryosphere (the frozen parts of the Earth’s water system), and biogeochemistry cycles—all of which are influenced by moist convection.

“The representation of moist convection and associated cloud formations is often identified as one of the major sources of uncertainty in our ability to understand how the Earth’s climate will change due to human activities,” Taylor said.

The MMF configuration addresses a throughput problem that limits E3SM’s ability to resolve clouds, which typically requires a grid spacing of 1–3 kilometers in simulation models. However, E3SM’s global model usually runs at a grid spacing of 25–100 kilometers to achieve simulation rates (throughput) of better than 5 simulated years per day of computation. This coarser resolution allows simulation campaigns spanning several hundred years to be completed in a reasonable time frame.

Brute-force refinement of the current 28 km simulations down to 1 km horizontal grid spacing to explicitly resolve clouds would require on the order of 20,000 times more computations to achieve the same time length of climate simulation. Roughly 1,000 times comes from the increase in the number of total grid points, and another roughly 20 times comes from the smaller time step size needed to properly resolve the dynamics.
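The estimate above can be checked with a little arithmetic. The following is a minimal sketch, not the team's own calculation: the function name is hypothetical, the quoted factors of roughly 1,000 and roughly 20 are the article's rounded values, and the last step assumes the hardware stays the same.

```python
def horizontal_grid_factor(coarse_km: float, fine_km: float) -> float:
    """Growth in the number of horizontal grid points when refining the spacing.

    Refinement applies in both horizontal directions, so the count scales
    with the square of the spacing ratio.
    """
    return (coarse_km / fine_km) ** 2


# Refining 28 km spacing to 1 km multiplies the horizontal point count by 28 * 28.
exact_grid_factor = horizontal_grid_factor(28.0, 1.0)

# Rounded factors quoted in the article.
ARTICLE_GRID_FACTOR = 1_000   # "roughly 1,000 times" from more grid points
ARTICLE_STEP_FACTOR = 20      # "roughly 20 times" from the smaller time step
total_cost_factor = ARTICLE_GRID_FACTOR * ARTICLE_STEP_FACTOR

# Assumption: on unchanged hardware, throughput falls by the same factor.
baseline_sypd = 5.0           # simulated years per day at coarse resolution
refined_sypd = baseline_sypd / total_cost_factor
days_per_simulated_year = 1.0 / refined_sypd

print(f"grid factor from 28 km to 1 km: {exact_grid_factor:.0f}")
print(f"total cost factor: {total_cost_factor}")
print(f"days of computation per simulated year: {days_per_simulated_year:.0f}")
```

Under these assumptions, a model that delivers 5 simulated years per day would need thousands of days of computation for each simulated year, which is why brute-force refinement is impractical for multi-century campaigns.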

“As we spread to more parallel nodes to try to get the computation to run faster, eventually the parallel overhead starts to take over, and we get no benefit from increasing parallelism; we cannot make the model run faster. We recognized that we needed a different algorithmic approach than just a straightforward grid refinement,” said Matt Norman, leader of the Advanced Computing for Life Sciences and Engineering group at the National Center for Computational Sciences at ORNL, who oversees ORNL’s ECP-funded efforts to optimize E3SM-MMF for GPU-equipped supercomputers.

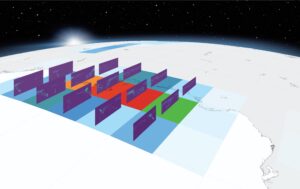

E3SM-MMF replaces E3SM’s atmospheric convection parameterization with a 2D cloud-resolving model that runs within each grid point. This keeps the global model running at 25–100 km resolutions while the MMF convection models run at 1–3 km. The coarser resolution for the global model allows simulation campaigns spanning several hundred years to be completed in a reasonable time frame while also significantly improving cloud resolution. Image: ECP/E3SM-MMF.

The MMF algorithmic approach replaces E3SM’s atmospheric convection parameterization with a two-dimensional cloud-resolving model that runs within each grid point. The global model keeps running at 25–100 km resolutions while the embedded MMF convection models run at 1–3 km. These cloud-resolving models do not exchange data directly with each other, so parallel overheads are significantly reduced. This allows researchers to scale E3SM-MMF to run very large simulations while still meeting throughput requirements.
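The structure described above can be illustrated with a toy sketch. It is not E3SM-MMF code: the function names, the relaxation "dynamics," and all numbers are hypothetical stand-ins chosen only to show that each embedded cloud-resolving model (CRM) reads its own host grid point and nothing else.

```python
def step_crm(crm_cells, host_value, dt=0.1):
    """Advance one embedded CRM using only its own host grid point's value.

    Toy dynamics (an assumption for illustration): each fine-scale cell
    relaxes toward the host value.
    """
    return [cell + dt * (host_value - cell) for cell in crm_cells]


def mmf_step(host_values, crms, dt=0.1):
    """Advance every CRM, then return each CRM's mean as feedback to its host."""
    # No CRM reads another CRM's cells, so each iteration is independent
    # and could run on a different GPU or node without communication.
    new_crms = [step_crm(cells, host, dt) for cells, host in zip(crms, host_values)]
    feedback = [sum(cells) / len(cells) for cells in new_crms]
    return new_crms, feedback


# Three coarse grid points, each hosting a tiny two-cell CRM.
host_values = [1.0, 2.0, 3.0]
crms = [[0.0, 2.0], [1.0, 3.0], [2.0, 4.0]]
new_crms, feedback = mmf_step(host_values, crms)
print(feedback)
```

Because the per-point updates are independent, processing the grid points in any order, or in parallel, gives the same result. That independence is what keeps parallel overhead low in this kind of design.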

“These MMF simulations are often 100 times more expensive to run than the traditional climate-modeling approach. So, clearly, we could never do that without some sort of acceleration. Summit and Frontier, with their GPUs and the way they set up their nodes, were great targets for this,” Norman said.

The largest E3SM-MMF benchmark conducted by the team for their study ran on 4,600 Summit nodes, and the GPU-ported code netted 5.38 petaflops of compute throughput.

Beyond throughput increases, the team found that E3SM-MMF also improves the modeling of related water-cycle phenomena such as mesoscale convective systems (MCSs), which are organized complexes of convection that can span over 1,000 km. In addition to strongly influencing local water cycles, MCSs create extreme weather events, such as flooding, and account for roughly half of all tropical rainfall.

Throughput and representation of convection systems will only improve as the team optimizes E3SM-MMF V2 for Frontier. One big change will be advancing the code from one-moment microphysics to two-moment microphysics.

“One-moment microphysics can evolve the mass of various types of water and hydrometeors, such as hail, graupel, snow, and cloud ice. But when we move to two-moment microphysics, we evolve mass and what we call number concentration, and it allows for better representation of different sizes of hydrometeors, how they aggregate, and how they heat and cool the atmosphere,” Norman said.
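A small sketch shows why tracking number concentration alongside mass carries size information. It is an illustration only, not the scheme used in E3SM-MMF: it assumes spherical particles of a single size, whereas real two-moment schemes work with size distributions, and the function name and input values are hypothetical.

```python
import math


def mean_diameter_m(mass_kg_per_m3, number_per_m3, particle_density_kg_per_m3):
    """Diameter of a sphere whose mass equals the mean mass per particle.

    Simplifying assumption: all particles are identical spheres.
    """
    mean_mass_kg = mass_kg_per_m3 / number_per_m3
    return (6.0 * mean_mass_kg / (math.pi * particle_density_kg_per_m3)) ** (1.0 / 3.0)


# The same condensate mass, split among few or many particles (illustrative values).
same_mass = 1.0e-3        # kg of condensate per cubic meter of air
ice_density = 900.0       # kg per cubic meter, a round illustrative value

few_large = mean_diameter_m(same_mass, 1.0e3, ice_density)
many_small = mean_diameter_m(same_mass, 1.0e6, ice_density)

print(f"few, large particles:  {few_large * 1e3:.3f} mm")
print(f"many, small particles: {many_small * 1e3:.3f} mm")
```

A one-moment scheme sees only `same_mass` and cannot tell these two cases apart. With number concentration as a second evolved quantity, the two cases yield different particle sizes, which in turn affect processes such as aggregation.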

The team aims to fully integrate MMF into the E3SM model, making it part of the next full version release.

OLCF is a DOE Office of Science user facility.

Related Publication

M. R. Norman, D. A. Bader, C. Eldred, W. M. Hannah, B. R. Hillman, C. R. Jones, J. M. Lee, L. R. Leung, I. Lyngaas, K. G. Pressel, S. Sreepathi, M. A. Taylor, and X. Yuan, “Unprecedented cloud resolution in a GPU-enabled full-physics atmospheric climate simulation on OLCF’s Summit supercomputer,” The International Journal of High Performance Computing Applications 36, no. 1 (July 2021): 93–105, https://doi.org/10.1177/10943420211027539.

UT-Battelle LLC manages Oak Ridge National Laboratory for DOE’s Office of Science, the single largest supporter of basic research in the physical sciences in the United States. DOE’s Office of Science is working to address some of the most pressing challenges of our time. For more information, visit https://energy.gov/science.

This research was supported by the Exascale Computing Project (17-SC-20-SC), a joint project of the U.S. Department of Energy’s Office of Science and National Nuclear Security Administration, responsible for delivering a capable exascale ecosystem, including software, applications, and hardware technology, to support the nation’s exascale computing imperative.